The Hidden Risk in Your AI Scribe

AI scribes are rapidly transforming clinical workflows across Australia and globally. From reducing administrative burden to improving documentation efficiency, the promise of AI medical scribing is compelling.

But beneath the surface lies a critical question that many healthcare leaders overlook:

“Is your AI scribe safe enough for patient data?”

Not all medical AI scribes are built the same. The architecture behind the system, whether open-source or closed-source, can significantly affect privacy, accuracy, and clinical reliability.

For doctors, practice owners, and hospital decision-makers, this is not just a technical distinction. It is a governance, compliance, and risk management issue.

What is a Medical AI Scribe?

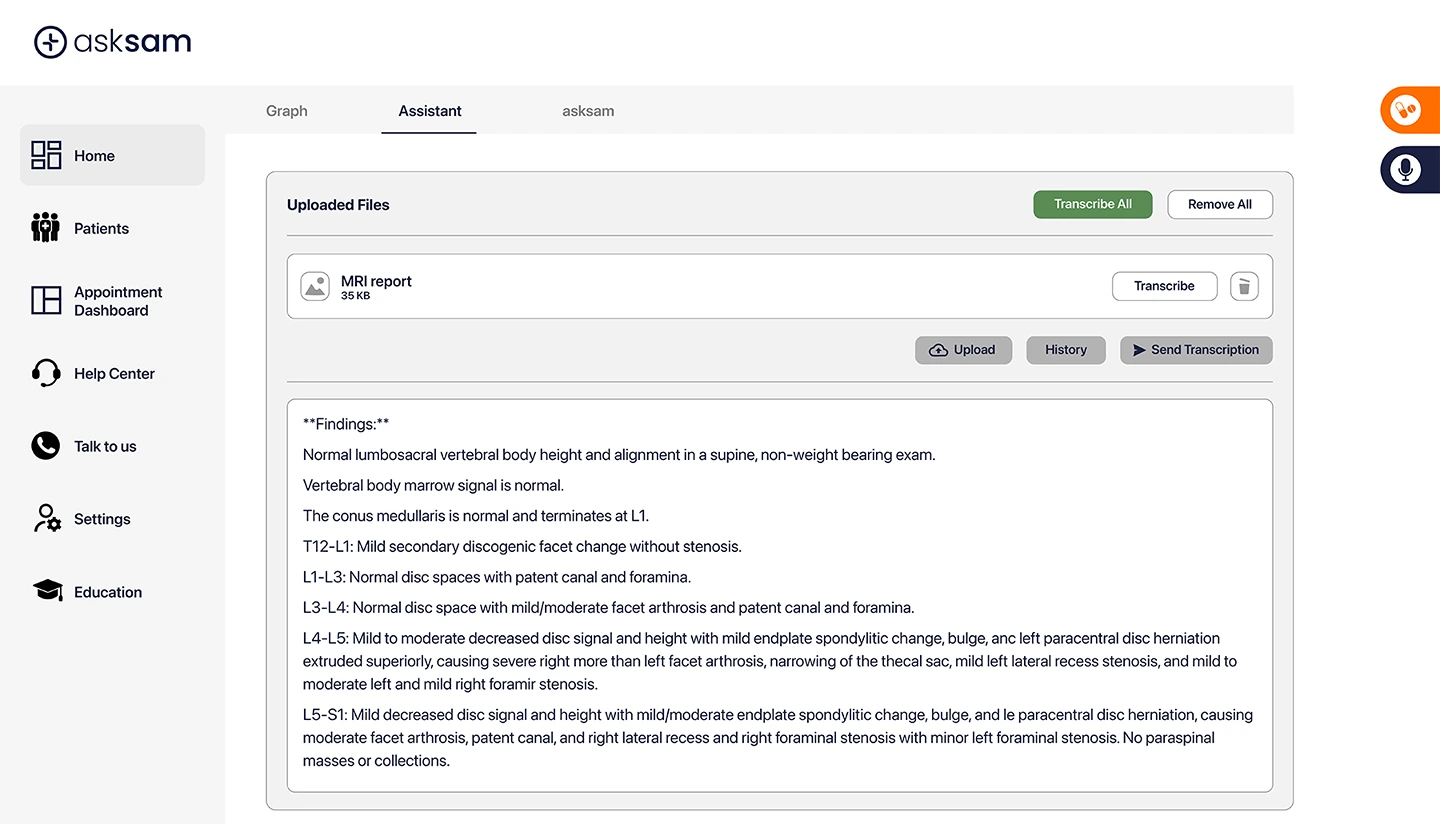

AI medical scribe software listens to clinician–patient interactions and converts them into structured clinical notes, letters, and documentation.

Modern systems go beyond transcription and support:

• AI clinical documentation

• Referral and letter generation

• Patient summaries

• Workflow automation

In Australia, demand for medical AI scribes is rising as clinicians seek to reduce burnout and streamline documentation.

Open vs Closed Source AI Scribes: What’s the Difference?

Open-Source AI Scribes

Open-source systems typically rely on publicly available large language models (LLMs) or APIs. These models are:

• Broadly trained on internet-scale data

• Flexible and customisable

• Often dependent on third-party infrastructure

However, this flexibility comes with trade-offs.

Closed-Source AI Scribes

Closed-source systems like asksam™ are proprietary platforms built specifically for healthcare environments. They feature:

• Controlled data environments, often drawing on medically verified literature

• Domain-specific medical training

• Strict security and compliance frameworks

For clinical use, this distinction is critical.

The Risks of Open-Source Medical AI in Healthcare

1. Patient Data Privacy and Security Risks

Open-source models often require data to be processed externally or through APIs.

This creates potential risks such as:

• Data leaving Australian jurisdiction

• Exposure to third-party systems

• Unclear data retention policies

Because open-source systems may rely on external infrastructure, data handling depends on how each deployment is configured, which increases compliance complexity.

For healthcare providers, this raises serious concerns under:

• Australian Privacy Principles (APPs)

• HIPAA and international frameworks

• Institutional governance policies
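The three data-handling risks listed above can be turned into a simple pre-deployment check. The following is a minimal sketch, not a compliance tool: the `ScribeDeployment` fields, the `au-` region naming convention, and the `privacy_findings` function are all hypothetical names invented for illustration, and a real assessment would rely on vendor contracts and legal review rather than a configuration flag.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ScribeDeployment:
    """Hypothetical description of where an AI scribe sends and keeps data."""
    name: str
    processing_region: str          # illustrative naming, e.g. "au-sydney"
    uses_third_party_api: bool      # True if audio or text goes to an external API
    retention_days: Optional[int]   # None means no retention policy has been stated


def privacy_findings(
    deployment: ScribeDeployment,
    allowed_region_prefixes: Tuple[str, ...] = ("au-",),  # assumed convention
) -> List[str]:
    """Return the privacy risks from the list above that apply to a deployment."""
    findings = []
    if not deployment.processing_region.startswith(allowed_region_prefixes):
        findings.append("data leaves Australian jurisdiction")
    if deployment.uses_third_party_api:
        findings.append("exposure to third-party systems")
    if deployment.retention_days is None:
        findings.append("unclear data retention policy")
    return findings
```

Calling `privacy_findings` on a deployment that processes data offshore through an external API with no stated retention period would return all three findings, while a locally hosted deployment with a documented retention period would return an empty list.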

2. Hallucination Risk and Clinical Accuracy

General-purpose open-source LLMs are not inherently designed for healthcare.

This can lead to:

• Fabricated or “hallucinated” clinical details

• Incomplete or incorrect documentation

• Inconsistent outputs across similar cases

Comparatively, open-model-based systems have been shown to carry moderate hallucination risk, particularly without strict clinical guardrails [1].

In a clinical setting, even small inaccuracies in AI-generated clinical documentation can create downstream risks in:

• Patient records

• Referrals

• Treatment continuity

3. Lack of Clinical Guardrails

Open-source AI tools are often:

• General-purpose rather than healthcare-specific

• Dependent on prompt engineering

• Lacking embedded clinical validation layers

This places a greater burden on clinicians to verify outputs, reducing efficiency gains.
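To make the idea of a validation layer concrete, here is a deliberately simple sketch of one possible check: flagging any number in a generated note (a dose, a blood pressure reading) that was never spoken in the consultation transcript. The function name `unsupported_numbers` is hypothetical, and this is an assumption about what such a layer might include, not a description of how any particular product works. A real clinical guardrail would need to cover far more than numbers.

```python
import re
from typing import List

# Matches whole numbers and decimals such as "500" or "7.5".
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(transcript: str, note: str) -> List[str]:
    """Return numeric values that appear in the note but not in the transcript."""
    heard = set(NUMBER.findall(transcript))
    return [value for value in NUMBER.findall(note) if value not in heard]
```

For a transcript that mentions "metformin 500 mg" and a note that records "Metformin 850 mg", the function would return `["850"]`, giving the clinician a specific item to verify instead of the whole note.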

Why Closed-Source AI Scribes Are Better for Healthcare

Closed-source systems are designed with clinical risk, privacy, and reliability at the core.

1. Data Stays Secure and Controlled

Closed systems ensure:

• No external data sharing

• Full control over infrastructure

• Compliance with Australian and global privacy standards

For example, closed-source platforms can ensure patient data remains within secure environments, with no exposure to external AI training systems.

2. Reduced Hallucination Risk

Closed-source AI uses:

• Verified medical datasets

• Structured workflows

• Domain-specific training

This significantly reduces the likelihood of incorrect outputs and improves doctors' trust in AI scribes.

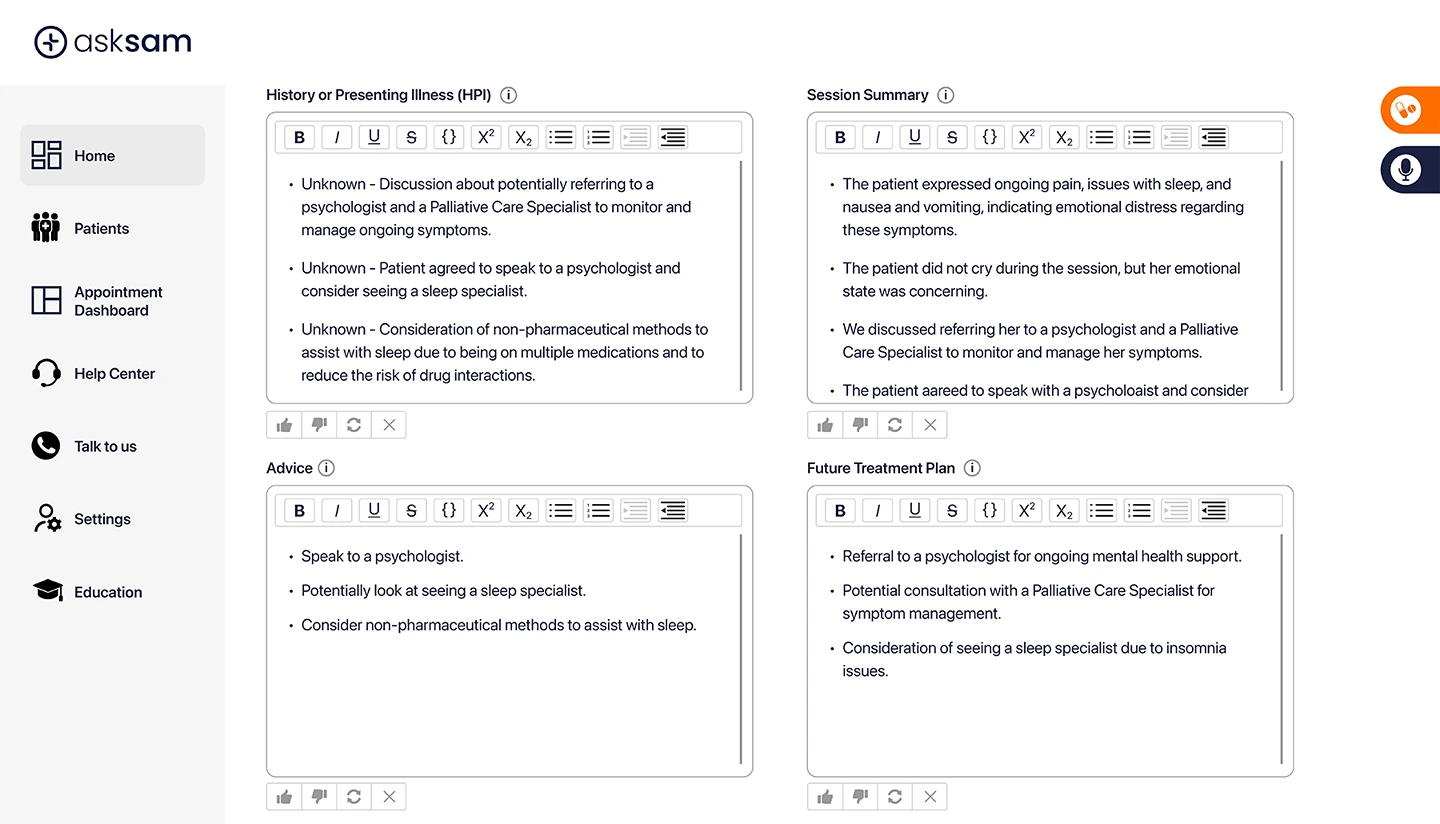

3. Built for Clinical Workflows, Not Just Text Generation

Unlike generic tools, closed systems are purpose-built for:

• End-to-end AI clinical documentation

• Structured patient histories

• Referral and letter automation

This enables a more complete workflow solution rather than documentation-only scribe functionality.

Real-World Use Case: Open vs Closed in Practice

Scenario: GP Consultation

A general practitioner uses an AI scribe during a 15-minute consultation.

With an open-source system:

• Notes are generated but may require heavy editing due to hallucination

• Patient information must be de-identified prior to processing to protect patient privacy

• Outputs from the LLM will be population-based rather than patient-specific because of de-identification

With a closed-source system:

• Patient data is protected within the closed-source architecture

• Responses are patient-specific

• Outputs are more consistent and clinically aligned

The difference is not just efficiency—it is risk exposure vs clinical confidence.
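The de-identification step in the open-source scenario can be sketched as follows. This is a minimal illustration under stated assumptions: the three patterns (email, numeric date, ten-digit phone number starting with 0) and the `redact` function are hypothetical examples, and real de-identification must also handle names, addresses, and identifiers buried in free text.

```python
import re

# Illustrative patterns only; they are assumptions for this sketch and do not
# cover names, addresses, Medicare numbers, or other identifiers.
PATTERNS = [
    ("[EMAIL]", re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")),
    ("[DATE]", re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b")),
    ("[PHONE]", re.compile(r"\b0\d(?:[ -]?\d){8}\b")),
]


def redact(text: str) -> str:
    """Replace each matched identifier with a fixed placeholder."""
    for placeholder, pattern in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text
```

Once the text has passed through `redact`, the model no longer sees the dates or contact details that tie a note to one person, which is why outputs in the open-source scenario tend to be population-based rather than patient-specific.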

Why asksam™ is the Preferred Closed-Source Medical AI Scribe

asksam™ is designed specifically for healthcare environments, combining security, accuracy, and workflow intelligence.

Closed-Source Architecture Matters

asksam™ operates in a fully closed environment, meaning:

• No reliance on open internet data

• No external data sharing

• Access only to verified medical sources

This significantly reduces both privacy risk and hallucination risk, providing clinicians with greater confidence in outputs.

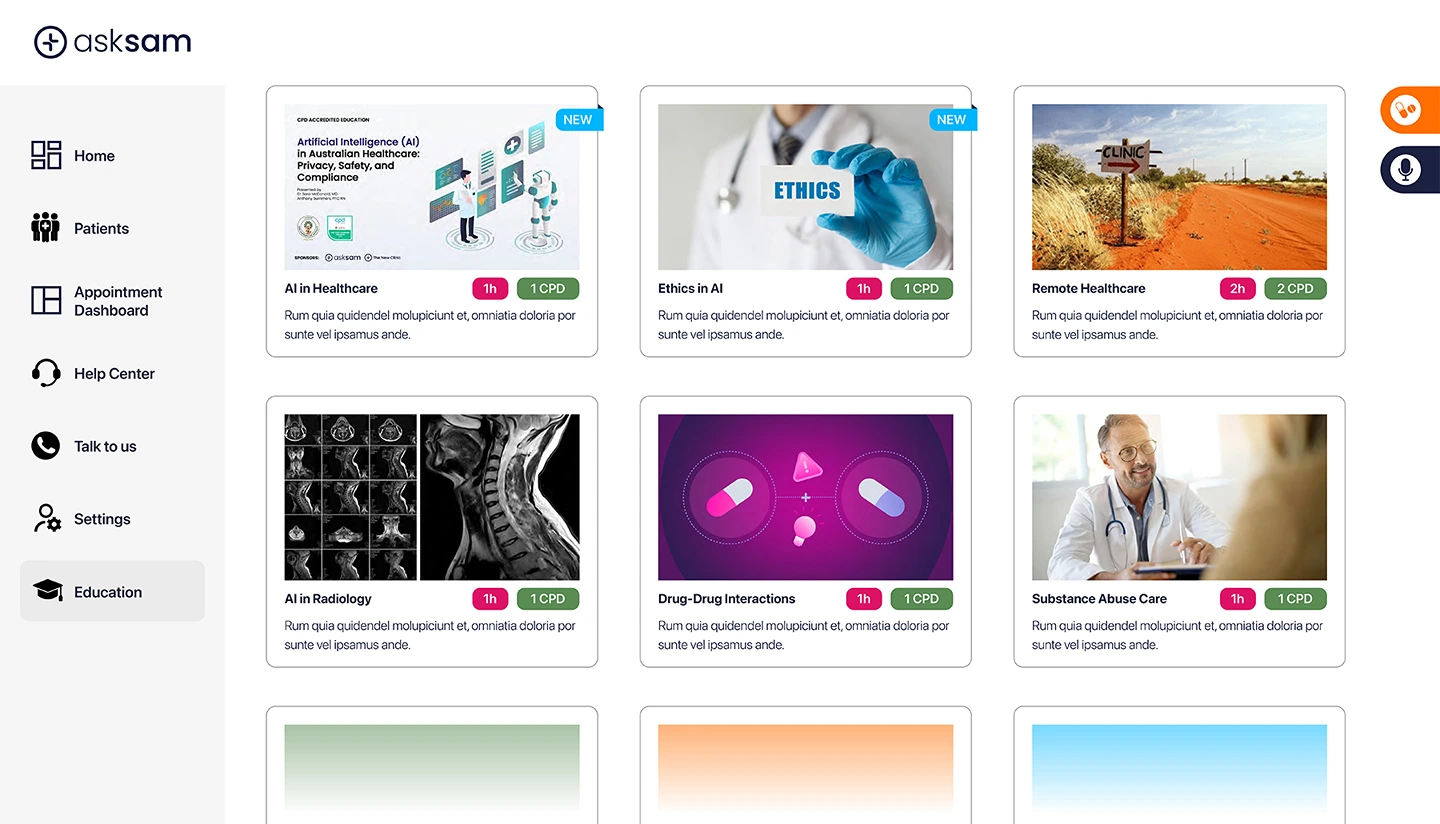

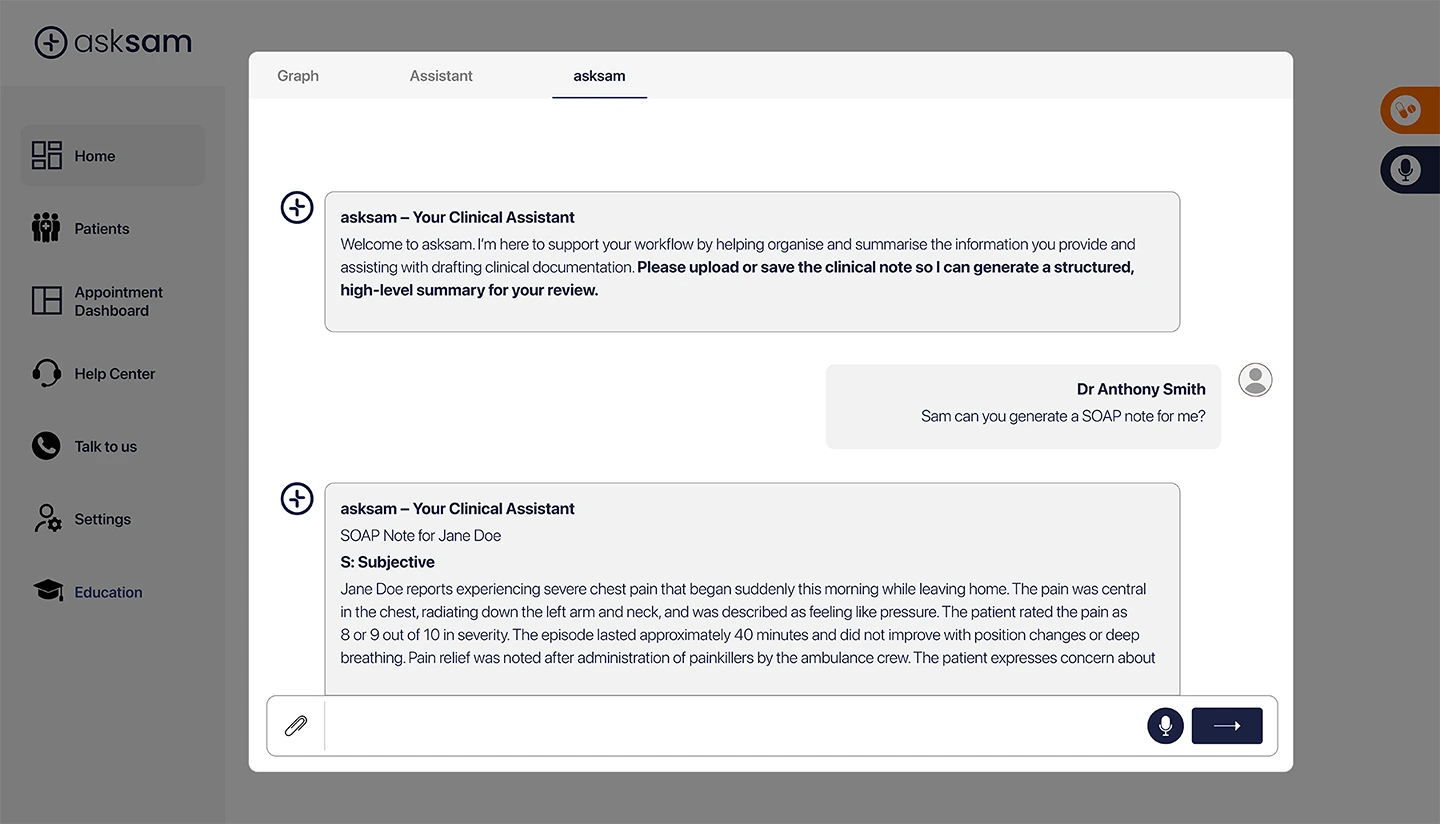

Beyond a Scribe: A True Clinical Assistant

Unlike traditional or open-source-based tools, asksam™ is more than a scribe: it’s your AI-powered clinical assistant.

It supports the entire clinical workflow:

• Before consult: patient history summaries

• During consult: real-time documentation

• After consult: referrals, letters, and follow-ups

Comprehensive Clinical Capabilities

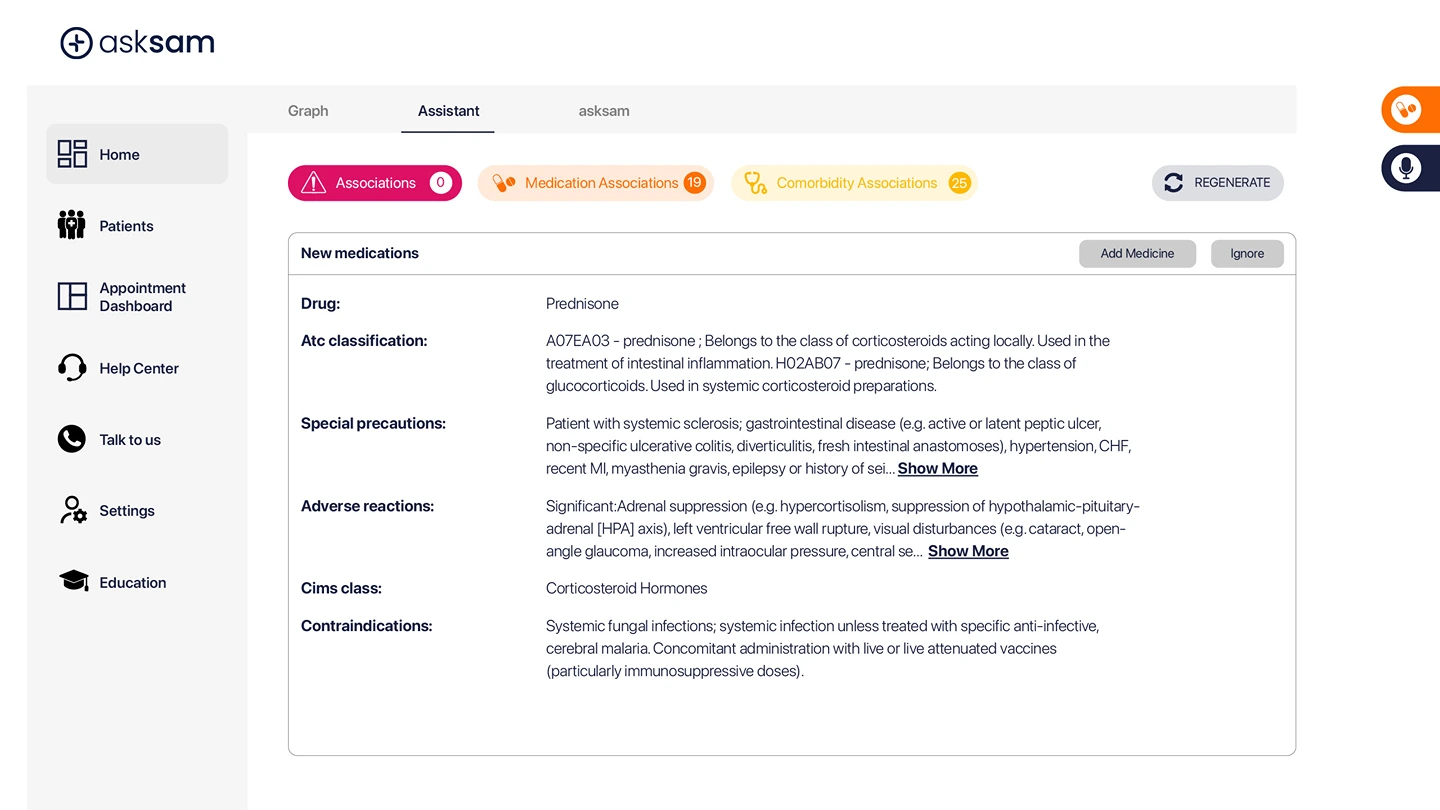

asksam™ goes beyond standard medical AI scribes by offering:

• Smart note generation (SOAP, progress notes)

• Unlimited document and referral creation

• Drug interaction alerts

• Patient-specific outputs based on full history

• Knowledge graph linking clinical data

Compared to open-model-based tools, it delivers:

• Lower hallucination risk [1]

• Medically derived suggestions

• End-to-end workflow automation

• Personalised patient responses

• Simplified access to medical literature

Designed for Australian Healthcare

asksam™ aligns with:

• Australian Privacy Principles

• Secure local data handling

• Clinical governance requirements

This makes it a strong choice for organisations seeking a medical AI scribe in Australia that prioritises compliance and trust.

Conclusion: Choose Safety, Not Just Convenience

AI scribes are no longer optional – they are becoming essential infrastructure in modern healthcare.

But the choice between open and closed source is critical.

Open-source AI offers flexibility – but at the cost of control, privacy, and consistency.

Closed-source AI offers security, reliability, and clinical alignment.

For healthcare organisations, the decision should be clear:

Patient data deserves the highest level of protection.

If you’re evaluating AI medical scribe software, prioritise systems built specifically for healthcare.

Explore how asksam™ can support your team with secure, reliable, and clinically aligned AI documentation.

References

1. Banerjee S, Agarwal A, Singla S. LLMs will always hallucinate, and we need to live with this. arXiv. Published September 9, 2024. Accessed March 31, 2026. https://doi.org/10.48550/arXiv.2409.05746

FAQs

1. What is the difference between open and closed source medical AI scribes?

Open-source scribes use publicly available AI models and may rely on external systems, while closed-source scribes operate in controlled, secure environments designed for healthcare.

2. Are open-source AI scribes safe for patient data?

They can be used safely with proper configuration, but they often introduce higher risks around data sharing, storage, and compliance compared to closed systems.

3. Why is hallucination a concern in medical AI?

Hallucinations can lead to incorrect or fabricated clinical information, which may impact documentation quality and downstream care processes.

4. What makes asksam™ different from other AI scribes?

asksam™ uses a closed-source architecture, verified medical data, and end-to-end workflow capabilities, making it more than just a documentation tool.

5. Can AI scribes replace clinicians or nurses?

No. AI scribes are support tools designed to reduce administrative burden, not replace clinical judgement or care delivery.