Jennifer Bechwati’s recent Channel 7 report on the rise of medical misinformation highlights a challenge clinicians are now confronting daily in their consulting rooms.

False health advice — increasingly delivered by AI-generated “experts” and fake doctors across social media — is spreading faster than evidence-based medicine can counter it. As the Australian Medical Association and the Royal Australian College of General Practitioners have warned, misinformation around vaccines, pregnancy, and treatment options is eroding trust in established medical guidance and placing growing pressure on frontline clinicians.

What is often missing from this conversation is an important distinction: not all AI is contributing to the problem. Some AI is being built deliberately to limit the spread of misinformation — not amplify it.

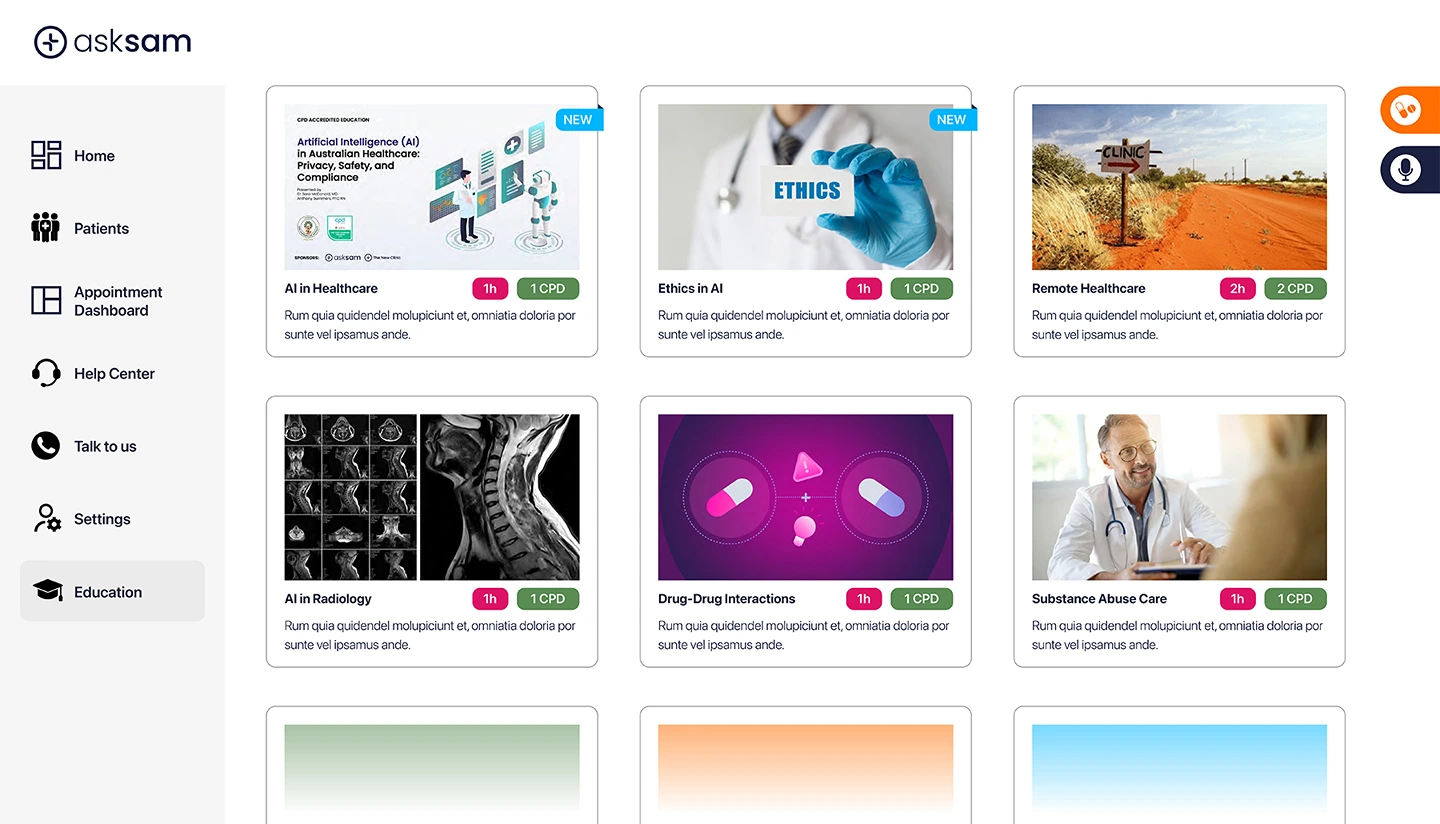

asksam™ was developed in direct response to this reality.

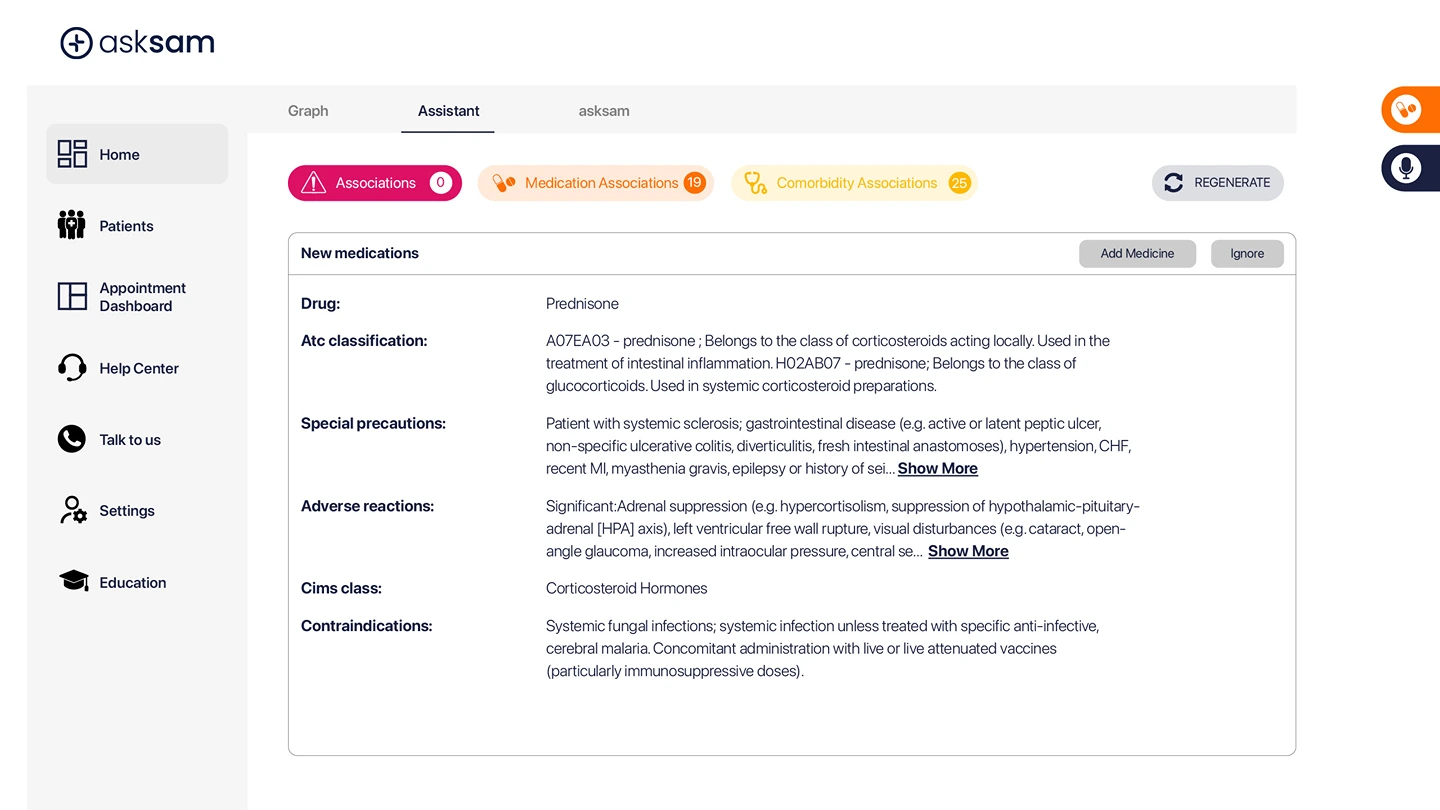

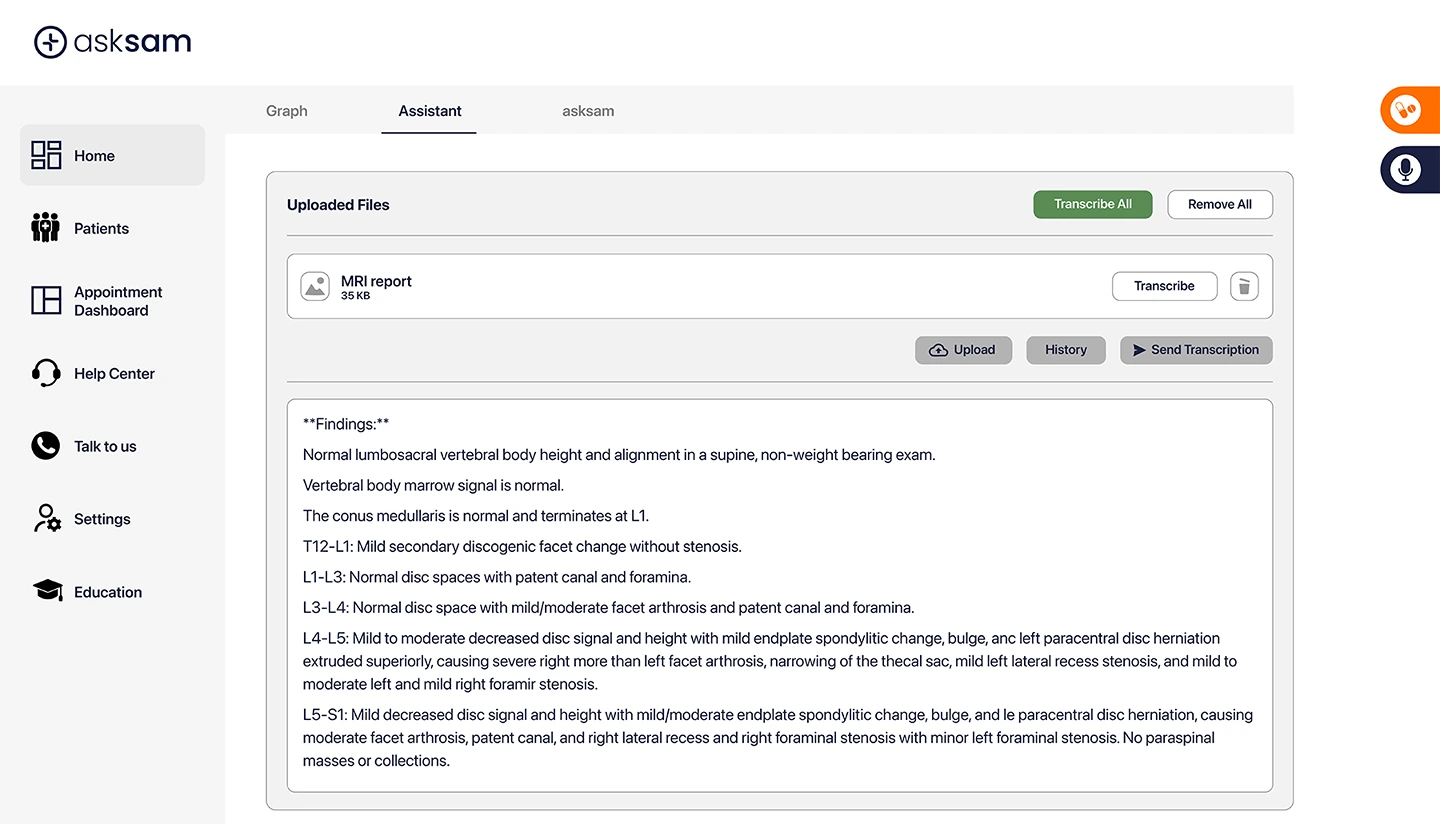

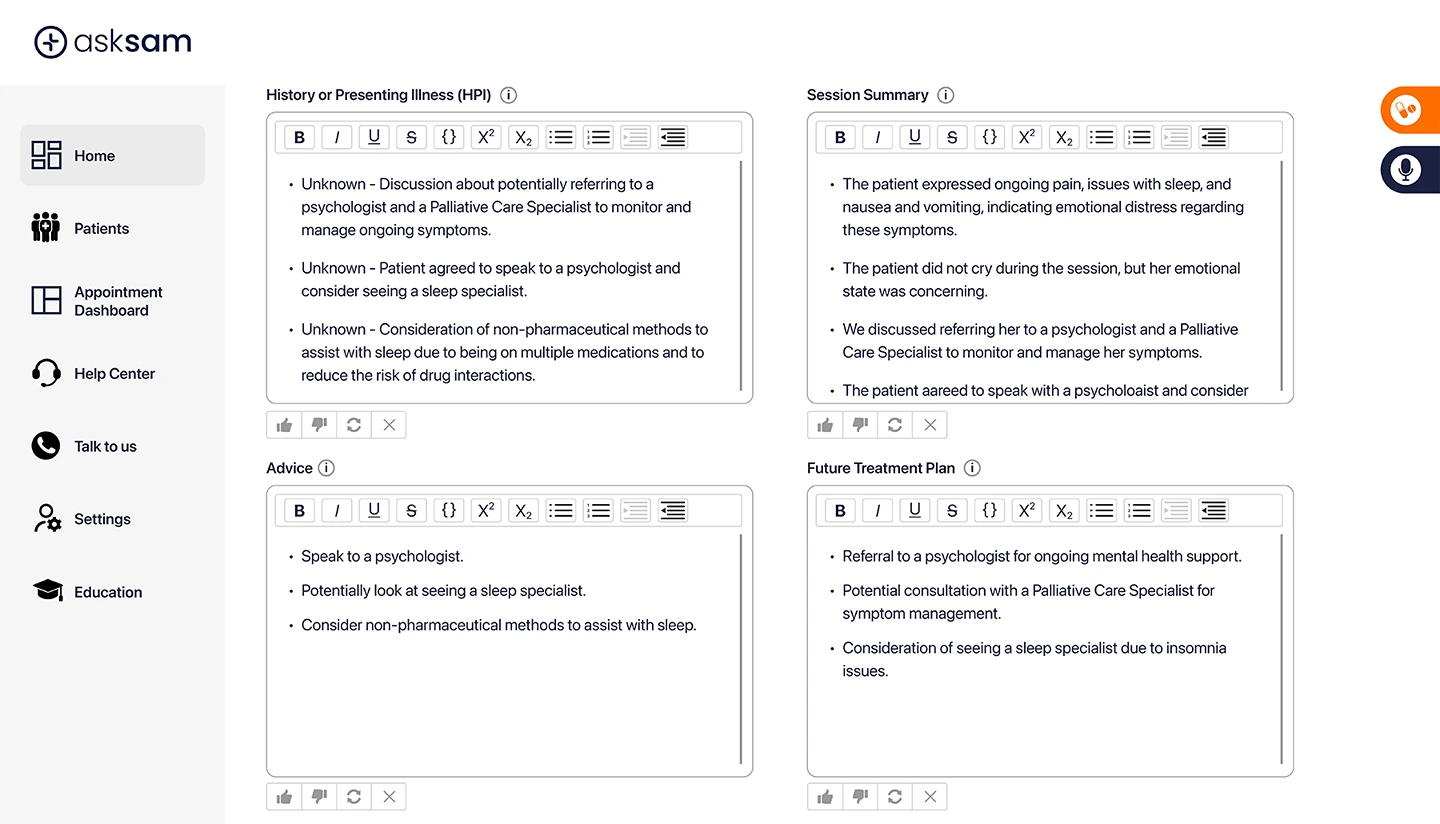

Unlike open, consumer AI tools that scrape the internet indiscriminately, asksam™ draws only on a closed, curated set of sources. It does not rely on social media posts, influencer content, opinion blogs, or unverified websites. Instead, its medical knowledge is grounded in peer-reviewed medical literature, recognised clinical guidelines, approved product information, and trusted healthcare sources.

That distinction matters.

Today, many patients arrive at appointments armed with information designed for engagement rather than accuracy. Algorithms reward confidence, controversy, and speed — not nuance or clinical context. As a result, clinicians are spending increasing amounts of consultation time correcting misconceptions instead of focusing on care.

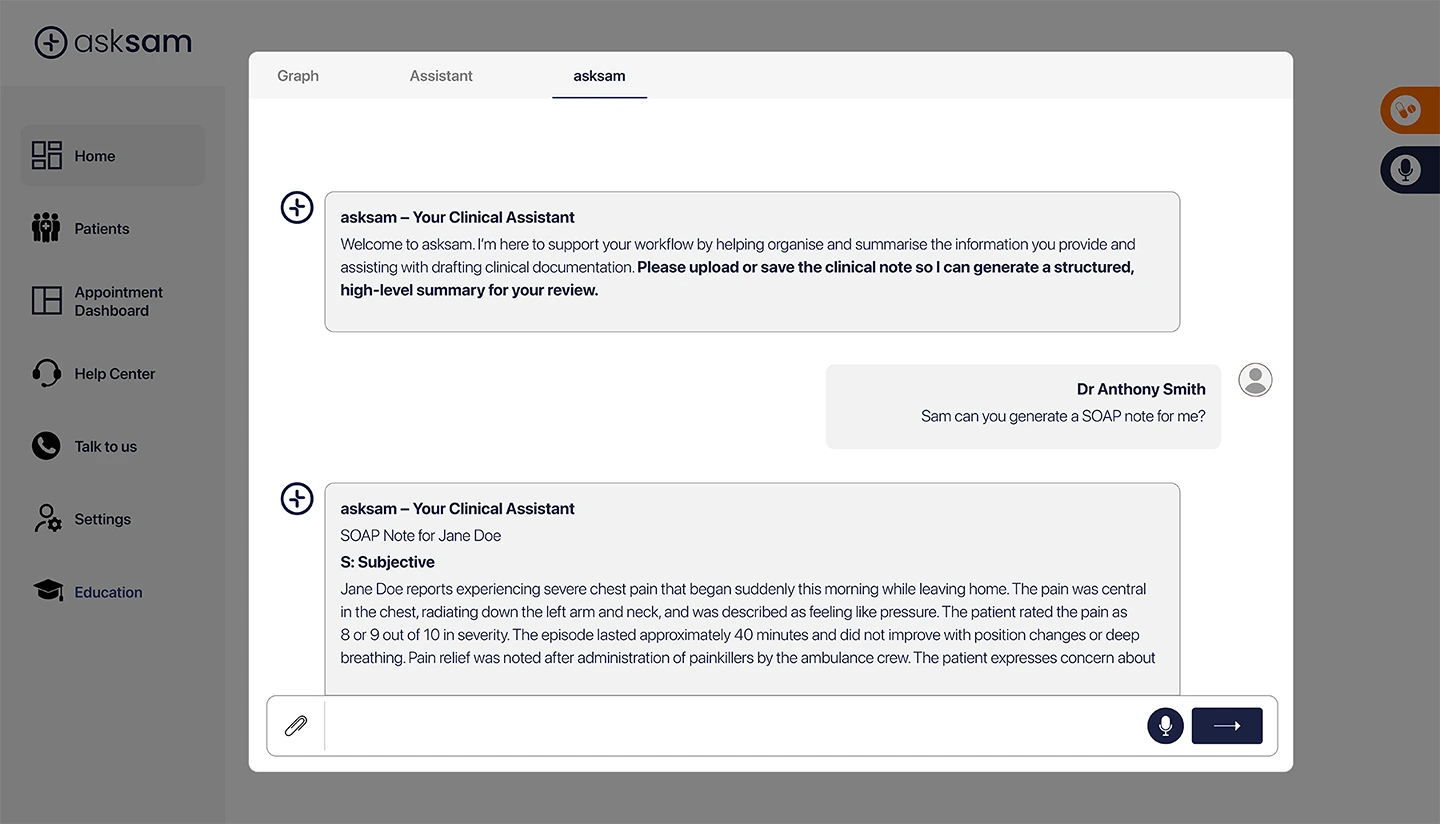

asksam™ is designed to work in the opposite direction.

Its role is to:

- Filter out unreliable medical information rather than surface it
- Align responses with established, evidence-based medical sources
- Encourage appropriate clinical follow-up rather than self-diagnosis
- Support clinicians, never replace them

In an environment where vaccine hesitancy has contributed to the re-emergence of conditions such as measles, the consequences of misinformation are no longer abstract. They are visible in delayed presentations, prolonged consultations, and heightened patient anxiety driven by conflicting information.

AI in healthcare should not add to the noise. It should help clinicians manage complexity, reduce cognitive burden, and guide conversations back to trusted medical evidence.

That is the role asksam™ is designed to play. It is more than a scribe: it is a medically grounded, accountable AI clinical assistant, built to support clinicians and help counter misinformation at a time when the line between information and influence has never been more blurred.

asksam™ — your trusted AI-powered clinical assistant, designed to support clinicians and protect patients, with a human touch